Researchers at the University of Wisconsin-Madison and the Universidad de Zaragoza in Spain have developed a new kind of virtual camera that appears to be able to see around corners.

The technology draws on lessons from classical optics and can produce images of scenes hidden behind barriers, making it useful for everything from surgery to defense and disaster relief, said Andreas Velten, lead researcher of the project and assistant professor in the Department of Biostatistics and Medical Informatics at UW-Madison.

“There are a lot of different regions and a lot of different scales that it could be used in and the immediate ones are … (for example) the fire department, if you have a burning building you want to know which of the rooms have people in them,” he said.

The study, published in the journal Nature, comes from a $6 million project funded largely by NASA and the United States military.

The technology could help the military in house-to-house fighting where rates of friendly fire deaths can be high, and NASA could use the system for cave imaging on the moon, Velten said.

Researchers have been studying this technology for years, Velten said. Scientists on this study drew on lessons from other imaging methods, such as ultrasound and gravitational wave imaging, which share the same underlying theory, to push the idea forward.

“It’s very useful because you can exchange information from one to the other,” he said. “And what we really have done is to point out that, if you can formulate this problem the right way, it also becomes part of the same theory and we can really think of this non-line-of-sight imaging to see around corners of projecting a virtual camera.”

What makes this study different from other research, Velten said, is that it goes beyond reproducing a scene containing only an object or two, allowing scientists to reconstruct a detailed image of the hidden scene. Earlier reconstructions were limited to simple scenes, which has prevented more widespread use of the technology.

The study’s findings take into account limitations of existing imaging technology, such as the varying materials of walls and hidden surfaces, variations in brightness and noisy data.

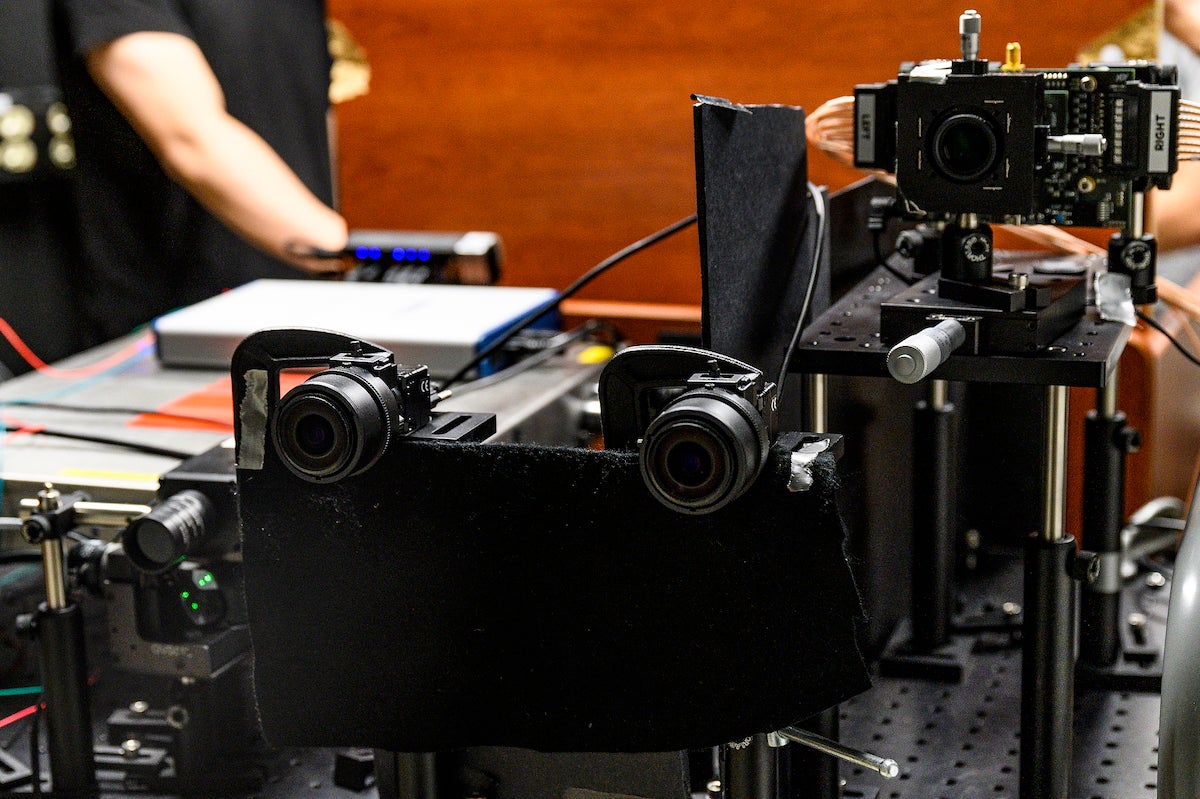

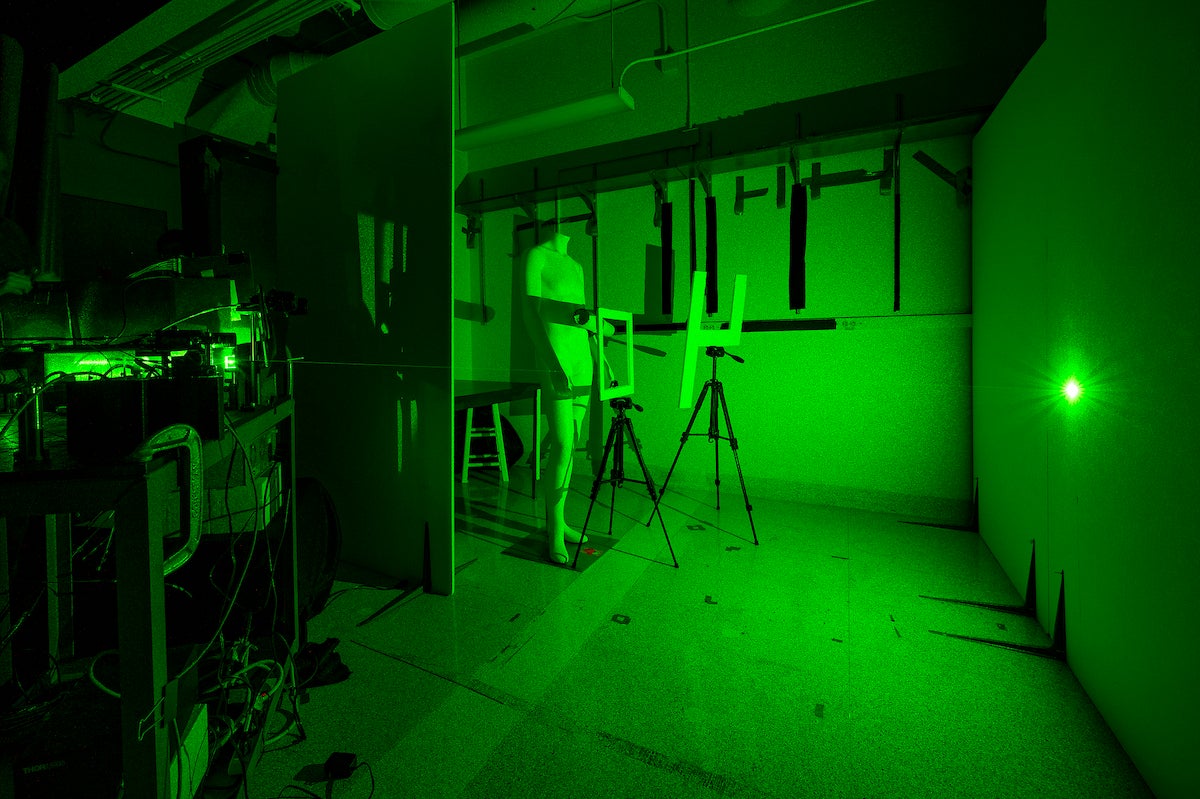

To reconstruct an image, the scientists sent thousands of short laser pulses to a surface (a wall or barrier of some sort). The light bounced off that surface into the hidden scene, and then bounced back again to the surface, where the camera captured the returning light.

“It’s fast enough to actually see it as a function of time — it measures the light, which is very fast,” Velten said. “And from that we can then reconstruct images of this hidden scene.”

Mathematical algorithms then reconstruct the image from the data the camera records.

“This reconstruction algorithm is really no different from what you would do if you wanted to reconstruct an image from the light that hits the aperture of your camera — that also has light arriving at different points in the scene, at different times and then you have to reconstruct an image from that,” he said. “So it’s basically the same diffraction math that’s helping the camera reconstruct its images for our eyes.”
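As a rough illustration of how arrival times alone can locate something hidden, here is a toy sketch in Python. It is not the diffraction-based method the study describes. It swaps in a much simpler "voting" backprojection, works in two dimensions, ignores noise, and assumes the laser and the camera look at the same wall point, so only the trip from the wall to the hidden object and back is timed. All names and numbers (`wall_xs`, `tol_m`, the object placed at 0.3 m, 0.8 m) are made up for the example.

```python
import math

C = 299_792_458.0  # speed of light in meters per second


def round_trip_time(wall_x, hidden):
    """Seconds for light to travel from wall point (wall_x, 0) to the hidden point and back."""
    hx, hy = hidden
    return 2.0 * math.hypot(hx - wall_x, hy) / C


def backproject(wall_xs, times, grid_xs, grid_ys, tol_m=0.02):
    """Return the grid cell whose distances best match the measured timings, with its vote count."""
    best_cell, best_votes = None, -1
    for gx in grid_xs:
        for gy in grid_ys:
            votes = 0
            for wx, t in zip(wall_xs, times):
                measured_m = C * t / 2.0  # one-way distance implied by the timing
                if abs(math.hypot(gx - wx, gy) - measured_m) <= tol_m:
                    votes += 1
            if votes > best_votes:
                best_cell, best_votes = (gx, gy), votes
    return best_cell, best_votes


# Hypothetical setup: 21 scanned wall points from -1 m to 1 m, one hidden point.
wall_xs = [i * 0.1 for i in range(-10, 11)]
hidden = (0.3, 0.8)
times = [round_trip_time(wx, hidden) for wx in wall_xs]

# Search grid covering the hidden region in 5 cm steps.
grid_xs = [i * 0.05 for i in range(-20, 21)]
grid_ys = [j * 0.05 for j in range(1, 31)]

estimate, votes = backproject(wall_xs, times, grid_xs, grid_ys)
print(estimate, votes)
```

In this noise-free setup, the grid cell that collects a vote from every wall point is the one where the simulated object sits. The timings involved are tiny: light covers about 0.3 millimeters in a picosecond, which is why the camera has to be so fast.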

At this point, the technique has only been used in the lab. While researchers have only looked at mannequins so far, Velten said they can tell the difference between a person and an animal and make out hand gestures.

There’s also potential for the imaging to produce video of hidden scenes, Velten said, which could happen in a year or so.

Wisconsin Public Radio, © Copyright 2026, Board of Regents of the University of Wisconsin System and Wisconsin Educational Communications Board.